The ICO has said that Global Witness can claim the data protection exemption for journalism in respect of its investigations into BSGR. This fascinating case continues to raise difficult and important questions.

Data protection law rightly gives strong protection to journalism; this is something that the 2012 Leveson inquiry dealt with in considerable detail, but, as the inquiry’s terms of reference were expressly concerned with “the press”, with “commercial journalism”, it didn’t really grapple with the rather profound question of “what is journalism?” But the question does need to be asked, because in the balancing exercise between privacy and freedom of expression too much weight afforded to one side can result in detriment to the other. If personal privacy is given too much weight, freedom of expression is weakened, but equally if “journalism” is construed too widely, and the protection afforded to journalism is consequently too wide, then privacy rights of individuals will suffer.

In 2008 the Court of Justice of the European Union (CJEU) was asked, in the Satamedia case, to consider the extent of the exemption from a large part of data protection law for processing of personal data for “journalistic” purposes. Article 9 of the European Data Protection Directive (the Directive) provides that

Member States shall provide for exemptions or derogations…for the processing of personal data carried out solely for journalistic purposes or the purpose of artistic or literary expression only if they are necessary to reconcile the right to privacy with the rules governing freedom of expression.

and recital 37 says

Whereas the processing of personal data for purposes of journalism or for purposes of literary or artistic expression, in particular in the audiovisual field, should qualify for exemption from the requirements of certain provisions of this Directive in so far as this is necessary to reconcile the fundamental rights of individuals with freedom of information and notably the right to receive and impart information

In Satamedia one of the questions the CJEU was asked to consider was whether the publishing of public-domain taxpayer data by two Finnish companies could be “regarded as the processing of personal data carried out solely for journalistic purposes within the meaning of Article 9 of the directive”. To this, the Court replied “yes”:

Article 9 of Directive 95/46 is to be interpreted as meaning that the activities [in question], must be considered as activities involving the processing of personal data carried out ‘solely for journalistic purposes’, within the meaning of that provision, if the sole object of those activities is the disclosure to the public of information, opinions or ideas [emphasis added]

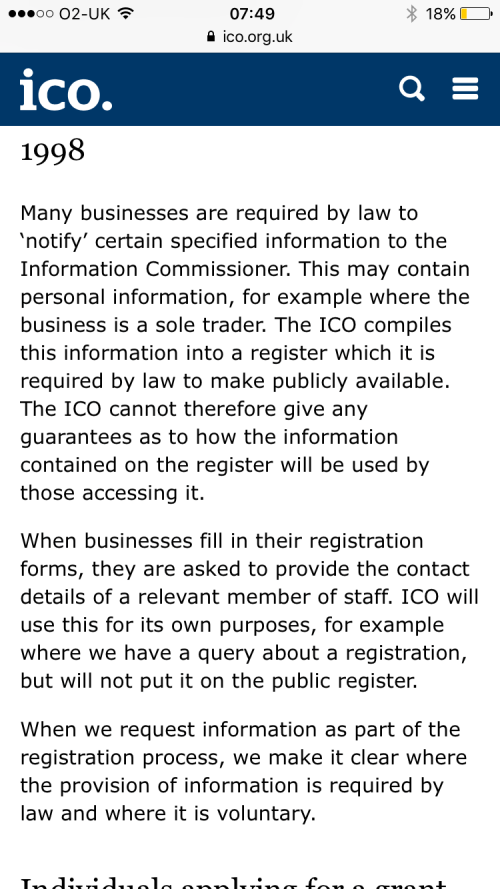

One can see that, to the extent that Article 9 is transposed effectively in domestic legislation, it affords significant and potentially wide protection for “journalism”. In the UK it is transposed as section 32 of the Data Protection Act 1998 (DPA). This provides that

Personal data which are processed only for the special purposes are exempt from any provision to which this subsection relates if—

(a)the processing is undertaken with a view to the publication by any person of any journalistic, literary or artistic material,

(b)the data controller reasonably believes that, having regard in particular to the special importance of the public interest in freedom of expression, publication would be in the public interest, and

(c)the data controller reasonably believes that, in all the circumstances, compliance with that provision is incompatible with the special purposes.

where “the special purposes” are one or more of “the purposes of journalism”, “artistic purposes”, and “literary purposes”. Section 32 DPA exempts data processed for the special purposes from all of the data protection principles (save the 7th, data security, principle) and, importantly, from the provisions of sections 7 and 10. Section 7 is the “subject access” provision, and normally requires a data controller, upon receipt of a written request by an individual, to inform them if their personal data is being processed, and, if it is, to give the particulars and to “communicate” the data to the individual. Section 10 broadly allows a data subject to object to processing which is likely to cause substantial damage or substantial distress, and to require the data controller to cease (or not begin) processing (and the data controller must either comply or state reasons why it will not). Personal data processed for the special purposes are, therefore, exempt from subject access and from the right to prevent processing likely to cause damage or distress. It is not difficult to see why: if the subject of, say, investigative journalism could find out what a journalist was doing, and prevent her from doing it, freedom of expression would be inordinately harmed.

The issue of the extent of the journalistic data protection exemption came into sharp focus towards the end of last year, when Benny Steinmetz and three other claimants employed by or associated with mining and minerals group Benny Steinmetz Group Resources (BSGR) brought proceedings in the High Court under the DPA seeking orders that would require campaigning group Global Witness to comply with subject access requests by the claimants, and to cease processing their data. The BSGR claimants had previously asked the Information Commissioner’s Office (ICO), pursuant to the latter’s duties under section 42 DPA, to assess the likelihood of the lawfulness of Global Witness’s processing, and the ICO had determined that it was unlikely that Global Witness were complying with their obligations under the DPA.

However, under section 32(4) DPA, if, in any relevant proceedings, the data controller claims (or it appears to the court) that the processing in question was for the special purposes and with a view to publication, the court must stay the proceedings in order for the ICO to consider whether to make a specific “special purposes” determination. Such a determination would be (under section 45 DPA) that the processing was not for the special purposes nor was it with a view to publication, and it would result in a “special information notice”. Such a stay was applied to the BSGR proceedings and, on 15 December, after some considerable wait, the ICO conveyed to the parties that it was “satisfied that Global Witness is only processing the personal data requested … for the purposes of journalism”. Accordingly, no special information notice was served, and the proceedings remain stayed. Although media reports (e.g. Guardian and Financial Times) talk of appeals and tribunals, no direct appeal right exists for a data subject in these circumstances, so if, as seems likely, BSGR want to revive the proceedings, they will presumably have to apply to have the stay lifted and/or issue judicial review proceedings against the ICO.

The case remains fascinating. It is easy to applaud a decision in which a plucky environmental campaign group claims the journalistic data protection exemption for its investigations of a huge mining group. But would people be so quick to support, say, a fascist group which decided to investigate and publish private information about anti-fascist campaigners? Could that group also gain data protection exemption by claiming that the sole object of their processing was the disclosure to the public of information, opinions or ideas? Global Witness say that

The ruling confirms that the Section 32 exemption for journalism in the Data Protection Act applies to anyone engaged in public-interest reporting, not just the conventional media

but it is not immediately clear from where they import the “public-interest” aspect – this does not appear, at least not in explicit terms, in either the Directive or the DPA. It is possible that it can be inferred, when one considers that processing for special purposes which is not in the public interest might constitute an interference with respect for data subjects’ fundamental rights and freedoms (per recital 2 of the Directive). And, of course, with talk about public interest journalism, we walk straight back into the arguments provoked by the Leveson inquiry.

Furthermore, one notes that the Directive talks about exemption for processing of personal data carried out solely for journalistic purposes, and the DPA says “personal data which are processed only for the special purposes are exempt…”. This was why I emphasised the words in the Satamedia judgment quoted above, which talks similarly of the exemption applying if the “sole object of those activities is the disclosure to the public of information, opinions or ideas”. One might ask whether a campaigning group’s sole or only purpose for processing personal data is journalism. Might they not, in processing the data, be trying to achieve further ends? Might one in fact say that the people who engage solely in the disclosure to the public of information, opinions or ideas are those we more traditionally think of in these terms…the press, the commercial journalists?

P.S. Global Witness have uploaded a copy of the ICO’s decision letter. This clarifies that the latter was satisfied that the former was processing for the special purposes because it was part of “campaigning journalism” even though the proposed future publication of the information “forms part of a wider campaign to promote a particular cause”. This chimes with the ICO’s data protection guidance for the media, but it will be interesting to see whether it is challenged on the basis that it doesn’t support a view that the processing is “only” or “solely” for the special purposes.

The views in this post (and indeed all posts on this blog) are my personal ones, and do not represent the views of any organisation I am involved with.